About this project

Not every task on the server needs an expensive model. A lot of the work is generic and simple, and a smaller local model handles it fine. aiRelay is the thing in the middle. Anything that needs a model for that class of task calls aiRelay, and aiRelay forwards it to whichever local model I have picked.

The reason it exists is practical: I want one switch. When I want to try a different local model for generic work, I change it once in aiRelay and every downstream project picks it up. Before this I had to go into each project one by one, which got tedious fast.

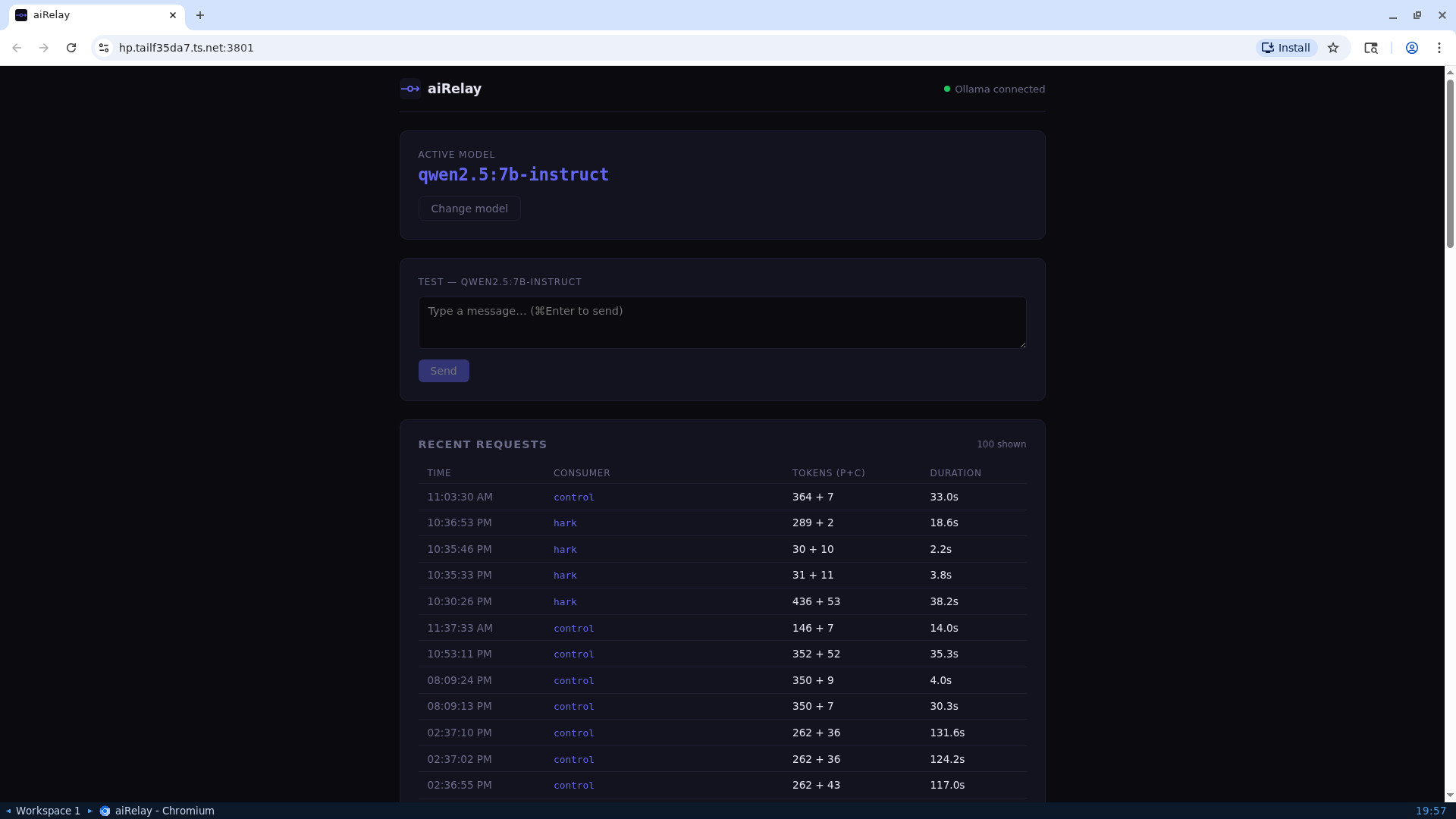

It is not clever. No load balancing, no failover, no caching. There is some logging which I mostly use for debugging my own bots, and a small UI I occasionally open to try ad hoc queries against whichever model is currently selected. That UI has turned out to be more handy than I expected.

Screenshots