The practice.

AI writes a lot of code, very fast, and can make some mistakes along the way. That part is fine. Software teams have been catching wrong code for decades with tests, specs, shared knowledge, and decent ways of talking to each other. The tools below are how I gave AI the same scaffolding.

If you'd rather start by seeing some things I've made with this methodology, go here →

-

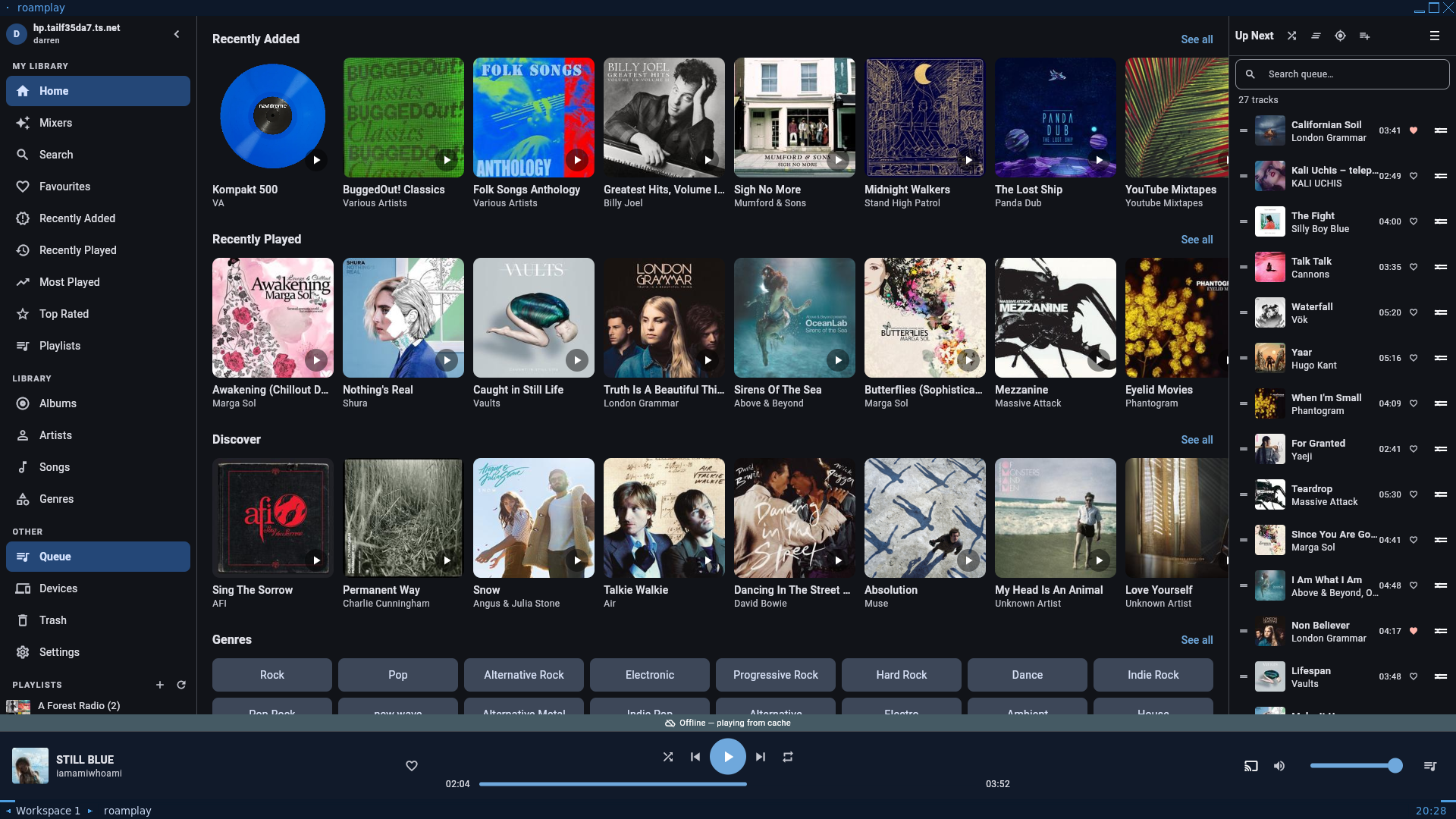

Doable

Doable is a task list, one queue per bot. When the Claude on roamPlay needs roadTerm to fix something, it raises a task on roadTerm's queue rather than reaching across and patching the other project itself. Each task runs in its own git worktree, so two or three bits of work can land on the same project in parallel without treading on each other. I have a queue there too, so when a Claude needs something from me, such as a command run as root or an action outside the server, it can ask.
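To make that concrete, here is a minimal sketch of the two ideas: one queue per owner, and one git worktree per task. The `Task` and `TaskBoard` names, the branch naming, the `/srv/roadTerm` path and the task summaries are all made up for illustration and are not Doable's real interface. The only real thing in it is the `git worktree add -b <branch> <path>` command, which the sketch builds but does not run.

```python
from collections import defaultdict, deque
from dataclasses import dataclass
from itertools import count
from pathlib import PurePosixPath
from typing import Optional


@dataclass
class Task:
    task_id: int
    owner: str        # the bot (or human) whose queue this sits on
    raised_by: str    # who asked for the work
    summary: str


class TaskBoard:
    """Hypothetical stand-in for Doable: one FIFO queue per owner."""

    def __init__(self) -> None:
        self._queues = defaultdict(deque)
        self._ids = count(1)

    def raise_task(self, owner: str, raised_by: str, summary: str) -> Task:
        task = Task(next(self._ids), owner, raised_by, summary)
        self._queues[owner].append(task)
        return task

    def next_task(self, owner: str) -> Optional[Task]:
        queue = self._queues[owner]
        return queue.popleft() if queue else None


def worktree_command(repo: PurePosixPath, task: Task) -> list:
    """Build the git command that gives a task its own checkout and branch."""
    branch = f"task/{task.task_id}"                       # assumed naming scheme
    path = repo.parent / f"{repo.name}-task-{task.task_id}"
    return ["git", "-C", str(repo), "worktree", "add", "-b", branch, str(path)]


board = TaskBoard()
# roamPlay asks roadTerm for a fix instead of patching roadTerm itself.
board.raise_task("roadTerm", raised_by="roamPlay", summary="fix session resume")
# The human has a queue too, for things a bot cannot do.
board.raise_task("human", raised_by="roamPlay", summary="install a package as root")

task = board.next_task("roadTerm")
command = worktree_command(PurePosixPath("/srv/roadTerm"), task)
```

Because every task gets its own branch and directory, two tasks on the same project never share a working tree, which is what lets them run side by side.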

-

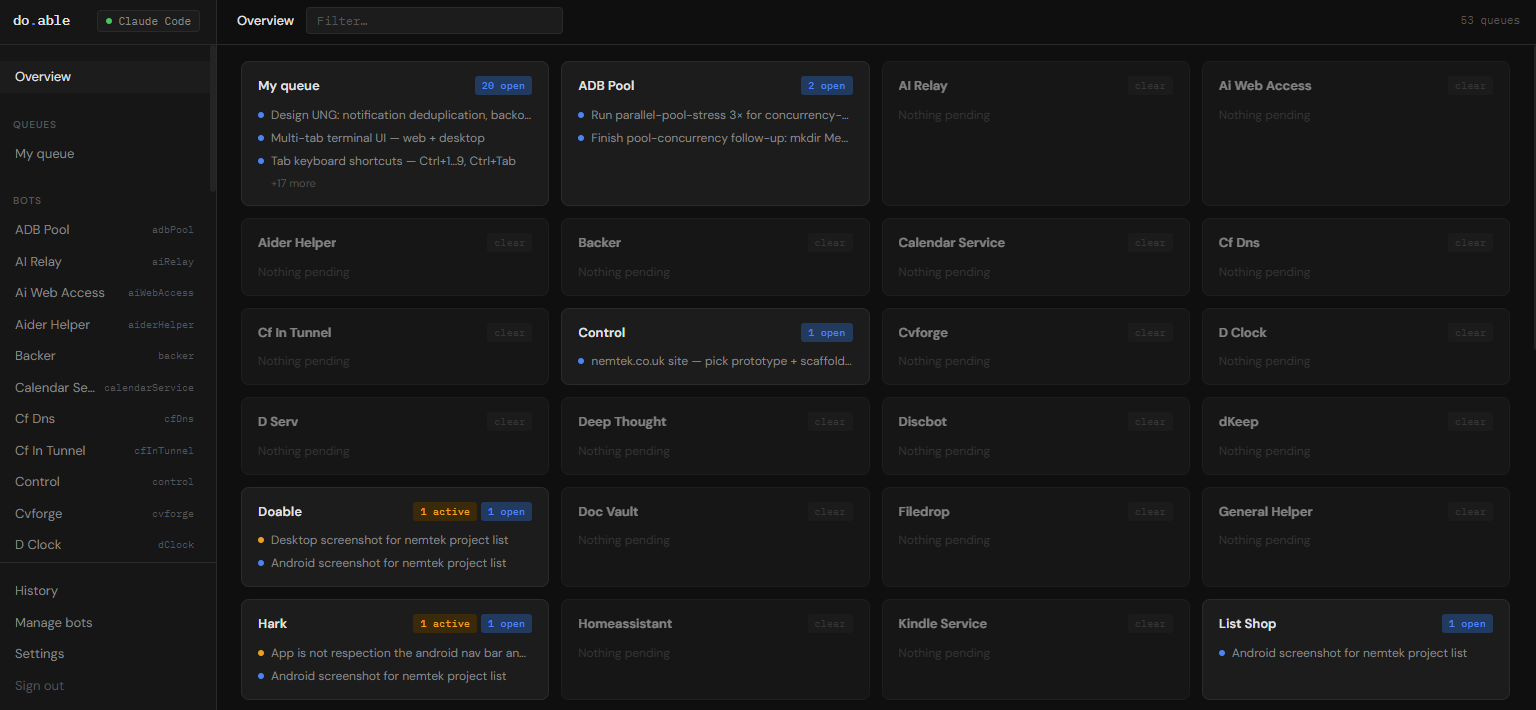

vncPool + adbPool

Headless tests miss the failures that actually matter. vncPool gives a Claude a real Linux desktop with a real browser inside it, so it can drive the feature, watch the result, and hand the desktop back when done. Reading what is on screen is its own problem, since the screenshots Claude gets back are low resolution, so there is an OCR tool and a zoom tool sat on top. adbPool is the Android sibling, a pool of emulators so several bots can test mobile apps at once without queueing on a single device, and without a Claude reaching for my actual phone, which used to happen.
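The "borrow it, use it, hand it back" pattern can be sketched as a lease on a shared pool. This is an assumption about the shape of the idea, not how vncPool or adbPool are actually built: `ResourcePool` and `lease` are hypothetical names, and the emulator serials are just the usual style of Android emulator names.

```python
import queue
from contextlib import contextmanager


class ResourcePool:
    """Hypothetical pool: hands out one resource at a time, takes it back after."""

    def __init__(self, names) -> None:
        self._free = queue.Queue()
        for name in names:
            self._free.put(name)

    @contextmanager
    def lease(self, timeout: float = 30.0):
        # Blocks until a resource is free; raises queue.Empty on timeout.
        name = self._free.get(timeout=timeout)
        try:
            yield name
        finally:
            # Always return the resource, even if the test run blew up.
            self._free.put(name)

    def free_count(self) -> int:
        return self._free.qsize()


emulators = ResourcePool(["emulator-5554", "emulator-5556"])

with emulators.lease() as device:
    in_use = emulators.free_count()   # one emulator is out on loan here

after = emulators.free_count()        # and back in the pool here
```

The `finally` is the part that matters: a bot that crashes mid-test still gives the desktop or emulator back, so the next one is not left waiting on a device nobody is using.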

-

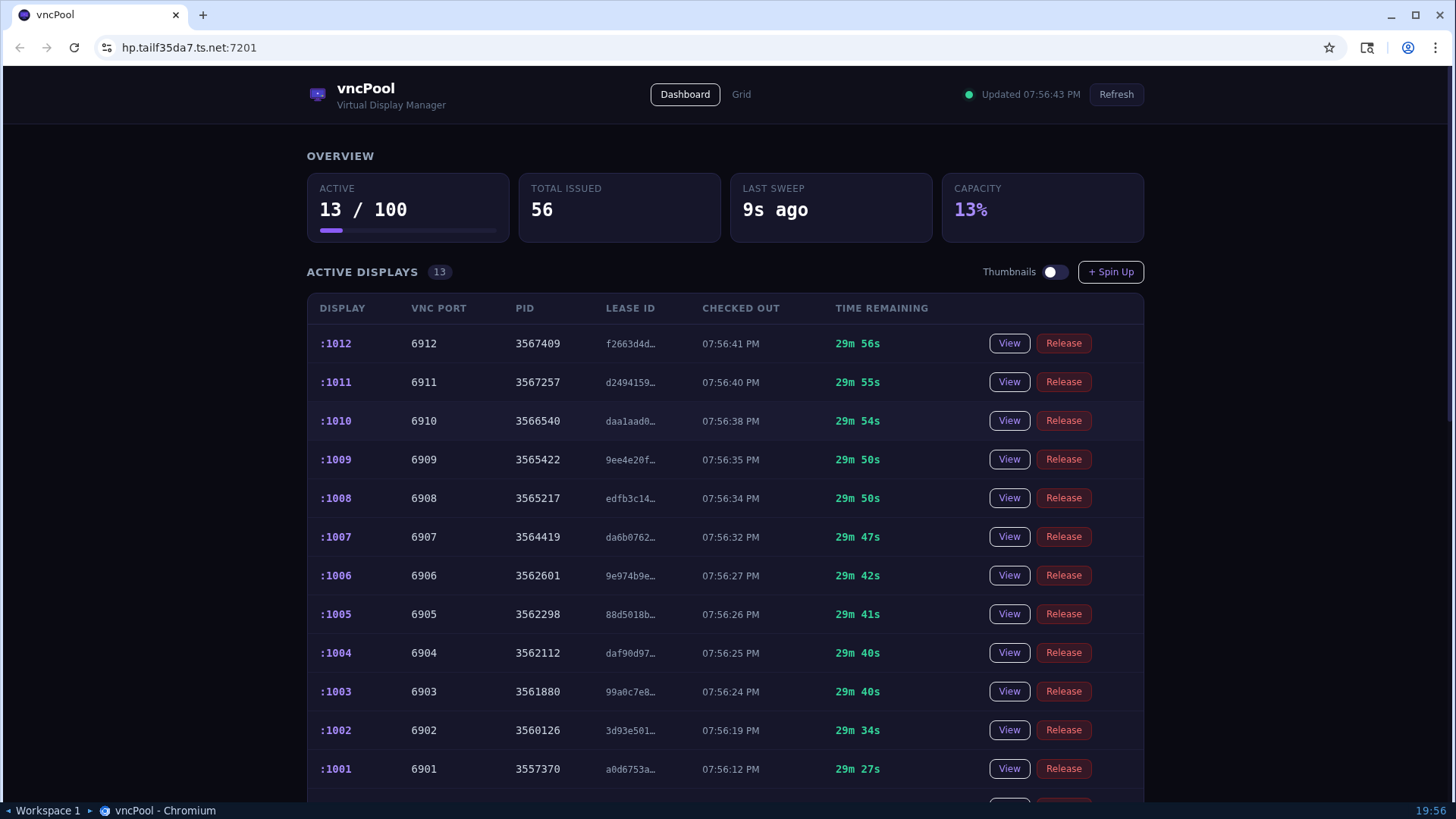

Quality

With a bit of coaxing, AI writes good code. Qual is the service in the system that enables this. It scans each project for the things worth catching early: secrets and credentials sat in the wrong place, basic security issues, god classes and oversized modules, structure that will be a pain to work with later. The findings go back to the bot that wrote the code so the first cleanup pass happens on its own. By the time I'm reading, the obvious stuff has already been dealt with.
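As a rough illustration of the kind of check described above, here is a small scanner that flags secrets in source text and modules that have grown too long. The rules, the 500-line threshold and the `Finding` shape are assumptions picked for the example, not Qual's real rule set. The sample key is the placeholder AWS uses in its own documentation.

```python
import re
from dataclasses import dataclass

# Illustrative rules only; a real scanner would carry many more.
SECRET_PATTERNS = {
    "aws-access-key-id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private-key-block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}
MAX_MODULE_LINES = 500  # assumed threshold for an "oversized module"


@dataclass
class Finding:
    path: str
    line: int
    rule: str


def scan_source(path: str, text: str) -> list:
    """Return a Finding for every secret-looking line, plus one if the file is too long."""
    findings = []
    lines = text.splitlines()
    for number, line in enumerate(lines, start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append(Finding(path, number, rule))
    if len(lines) > MAX_MODULE_LINES:
        findings.append(Finding(path, 1, "oversized-module"))
    return findings


sample = 'import os\nAWS_KEY = "AKIAIOSFODNN7EXAMPLE"\n'
findings = scan_source("settings.py", sample)
```

Each finding carries a path, a line and a rule name, which is enough to hand straight back to the bot that wrote the file as a cleanup task.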

-

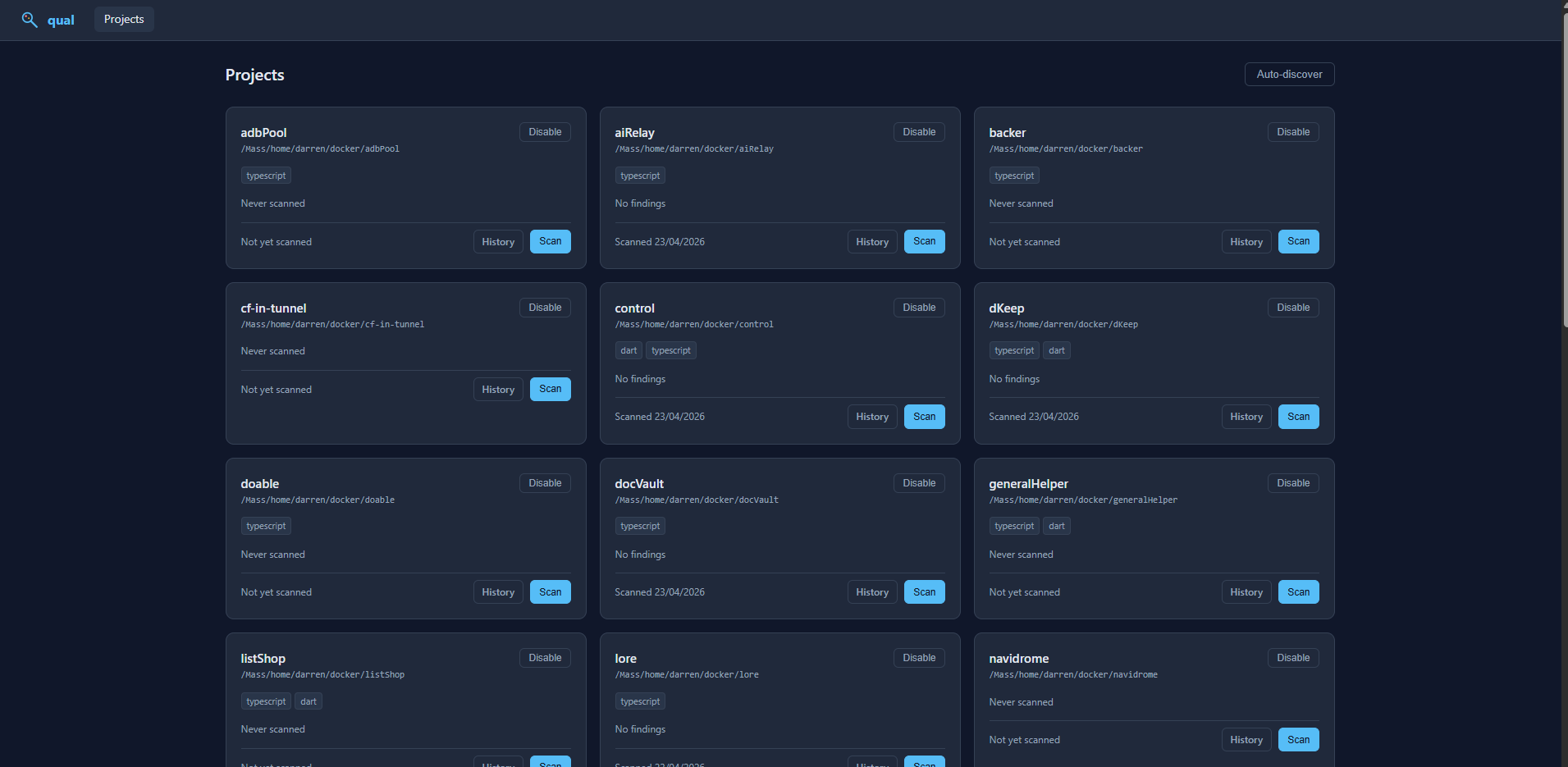

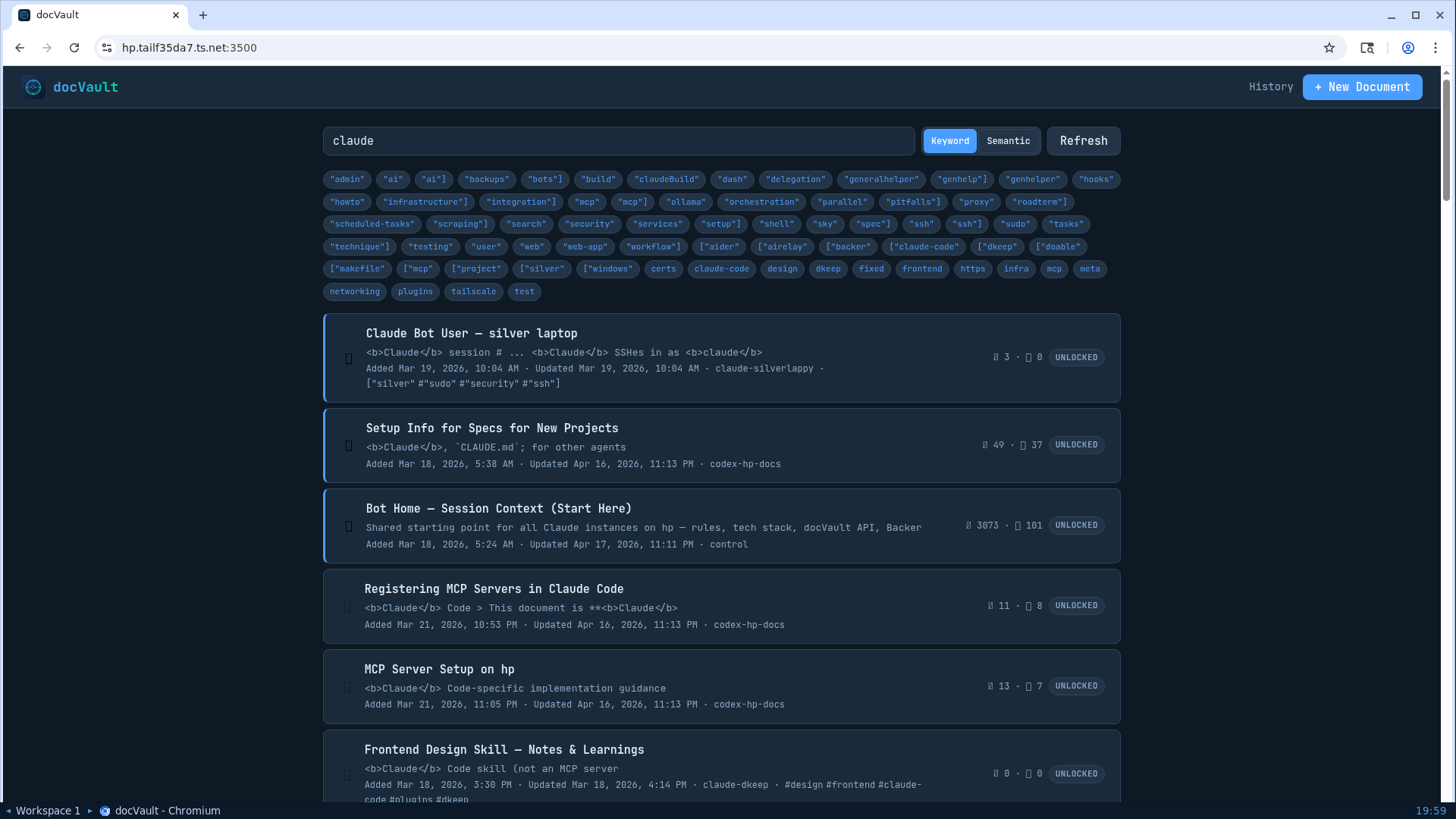

docVault

docVault is a shared wiki any AI on the server can read and write to. Claude is not the only one running on hp. Codex, OpenCode and a handful of others all reach for the same docVault. The rule book lives there: how to set up a new project, how to register a backup, how to write a test that actually proves something. Situational guidance lives there too, the stuff I don't want in every prompt but do want pulled in on demand. When one of the AIs learns something the others would benefit from, it writes the lesson onto the right page, and from then on every bot picks it up. Each AI on the server starts with a default system prompt that points at docVault as the source of truth for the rest.

-

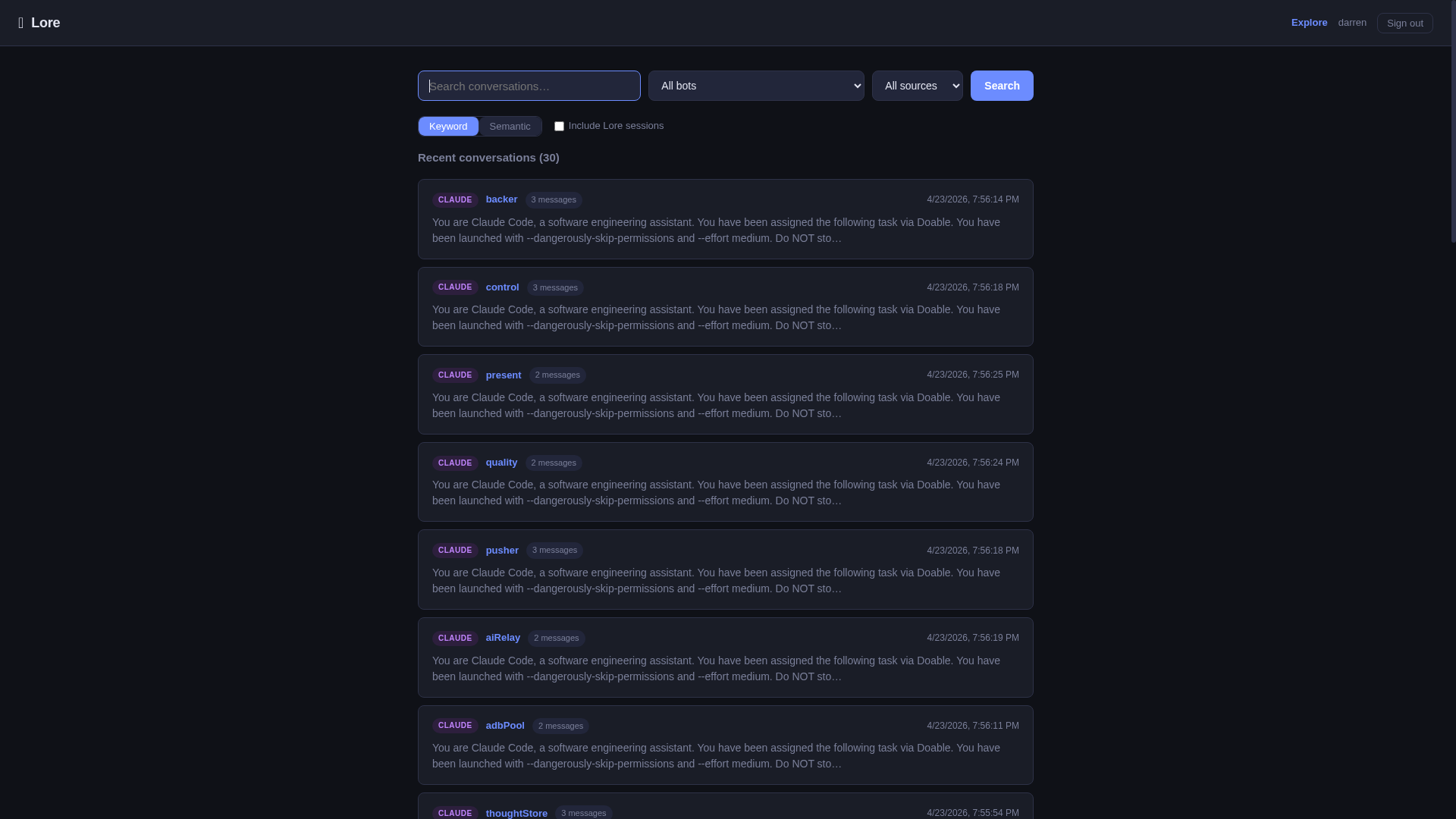

Lore

Every conversation I have with an AI, Claude, Codex, OpenCode, anything, gets ingested into Lore and made semantically searchable. When I half-remember a discussion from three weeks ago and can't find which project or session it happened in, I ask Lore. Lore almost always finds it, I drop the session ID into the relevant tool, and I'm straight back where I left off. Bots reach for Lore over MCP. When one starts work on something it has seen before, it can search the archive itself and pull back the context instead of asking me to recap.
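The lookup can be sketched as "turn everything into vectors, rank by similarity, return the session ID". To keep the example dependency-free, a plain bag of words stands in for a real embedding model, so it only matches on shared words and is not truly semantic. The session IDs and transcripts are invented, and none of this is Lore's actual interface.

```python
import math
import re
from collections import Counter


def vectorise(text: str) -> Counter:
    """Stand-in for an embedding model: a bag of lowercase words."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(archive: dict, query: str, top_k: int = 3) -> list:
    """Return (session_id, score) pairs, best match first."""
    query_vec = vectorise(query)
    scored = [(sid, cosine(query_vec, vectorise(text))) for sid, text in archive.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Hypothetical session IDs and transcripts.
archive = {
    "session-a": "we agreed to rotate the backup keys every month",
    "session-b": "the emulator pool ran out of devices during the mobile tests",
    "session-c": "voice dictation kept dropping the last word of the prompt",
}
best_id, best_score = search(archive, "when did we discuss rotating backup keys", top_k=1)[0]
```

The returned session ID is the useful part: it is what gets dropped into the relevant tool to reopen the conversation.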

-

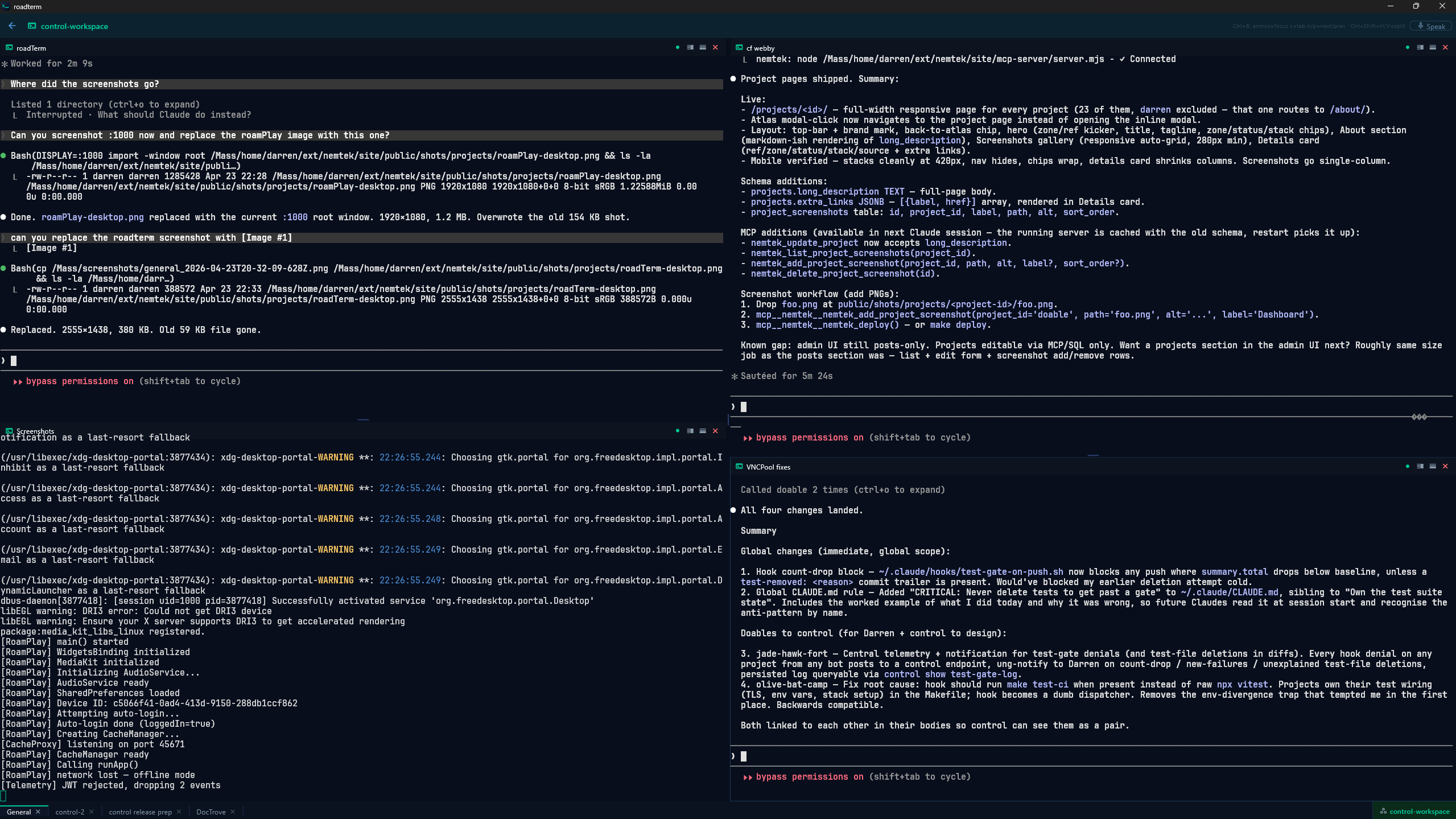

roadTerm

roadTerm is my terminal. Each Claude session lives in there, persistent across devices. I start something on the desktop at home, carry on from my phone on the train, and pick it back up on the laptop in the office. Same session, same open files, same bot still on the case. The other thing I lean on more than I expected is voice. Most of the time I'm not typing at Claude, I'm dictating into roadTerm and letting Whisper turn it into the prompt. Long thoughts come out faster that way, and Claude is good enough at distilling them into a plan I can push back on.

-

My part in it

AI does the typing, I do the steering. With this much code being written I'm not reading every line, but I read the parts that decide whether the system behaves: inputs and outputs, the shape of a Docker container, where secrets sit, the seams between services. Quality covers what I used to scan for by hand, so when I do read code I'm reading for judgement and direction rather than for typos. A fuller write-up of how this side of it actually works in practice is on its way.